4 changed files with 340 additions and 159 deletions

- `entries/web-development/media/websiteOptimization/beforeImprovements.png` (binary)
- `entries/web-development/media/websiteOptimization/serverCache.png` (binary)
- `entries/web-development/node-website-as-fast-as-possible.md` (+0 -159)
- `entries/web-development/node-website-optimization.md` (+340 -0)


entries/web-development/node-website-optimization.md

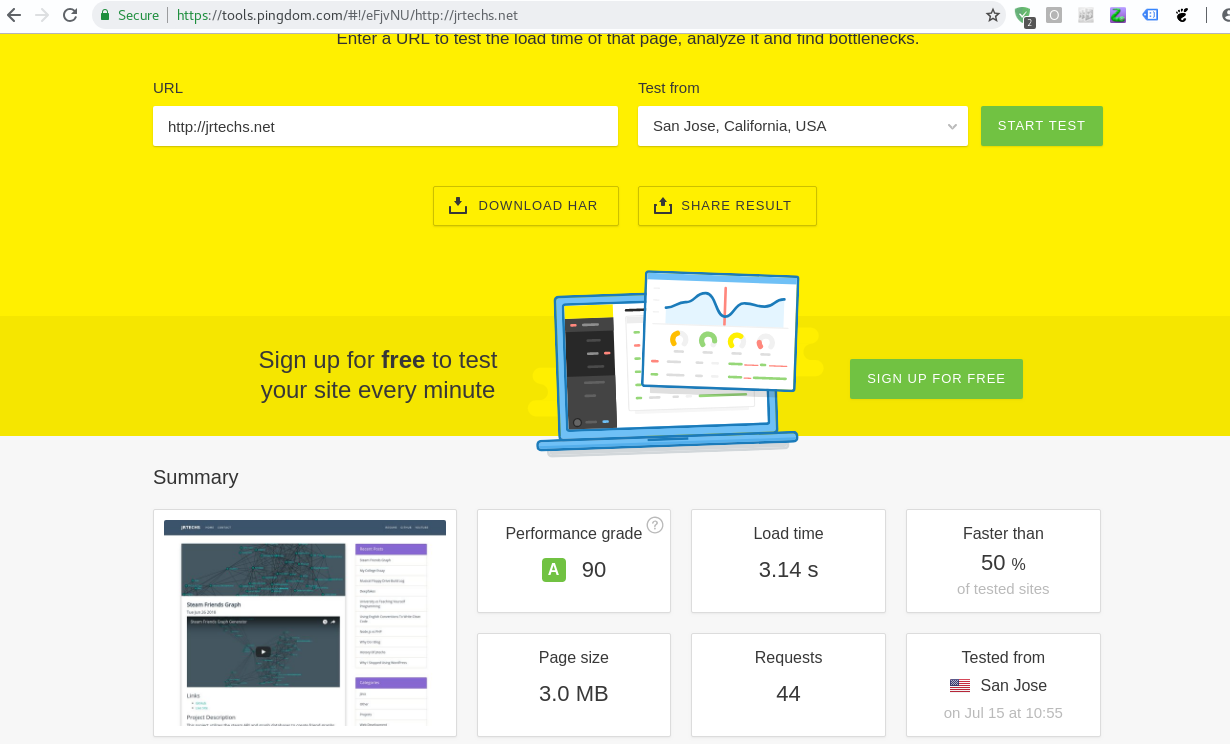

A fast website is critical for high user retention, and Google looks favorably upon websites that are well optimized and fast. If you are using a CMS like WordPress or Wix, a lot of optimization is done automatically. If you like to build stuff from scratch like me, optimizing a website takes a ton of work. This post covers the 8 things that I did to decrease the load time of this Node blog by two seconds.

#### After Optimization

#### Before Optimization

## 1: Optimize Images

Images are usually the largest part of a website's size, so optimizing and shrinking them greatly decreases load time. In a perfect web development world, everyone would use SVG images, which are extremely small for icons and simple graphics. However, since most of us use PNG and JPEG images, I wrote a script to automatically optimize images for the web.

```
#!/bin/bash
# Simple script for optimizing all images for a website
#
# @author Jeffery Russell 7-19-18
#
# Requires ImageMagick (convert), jpegoptim and optipng.

WIDTH="690>" # the ">" flag means images are only shrunk, never scaled up
folders=("./entries" "./img")

for folder in "${folders[@]}"; do
    # -iname matches .jpg and .JPG; -print0 with read -d '' keeps
    # filenames containing spaces intact
    find "$folder" -iname '*.jpg' -print0 | while IFS= read -r -d '' f; do
        convert "$f" -resize "$WIDTH" "$f"
        jpegoptim --max=80 --strip-all --preserve --totals --all-progressive "$f"
    done

    find "$folder" -iname '*.png' -print0 | while IFS= read -r -d '' f; do
        convert "$f" -resize "$WIDTH" "$f"
        optipng -o7 -preserve "$f"
    done
done
```

When run, this script goes through the img and entries folders recursively and optimizes every image in them. If an image is more than 690px wide, it is scaled down to save space. In most cases there is no point in serving images wider than 690px on this blog, because the client's web browser just scales them down anyway.

If you are running a Debian-based Linux distro, you can install the script's dependencies with the following commands (`convert` is part of ImageMagick):

```
apt-get install imagemagick
apt-get install jpegoptim
apt-get install optipng
```

The goal of this script is to get most images under 100 KB. It is OK to have a few images above 100 KB, but you should really avoid having images above 200 KB.
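
To check whether you are actually hitting those targets, a small Node script can list the images that are still over budget. This is a minimal sketch, not part of the blog's code: the function name `findOversizedImages` and the thresholds you pass to it are illustrative assumptions.

```javascript
const fs = require("fs");
const path = require("path");

// Extensions treated as images, compared case-insensitively.
const IMAGE_EXTENSIONS = new Set([".jpg", ".jpeg", ".png"]);

/**
 * Recursively walks `dir` and returns every image larger than `limitBytes`
 * as { file, bytes } objects, largest first.
 */
function findOversizedImages(dir, limitBytes) {
    const results = [];
    for (const entry of fs.readdirSync(dir, { withFileTypes: true })) {
        const fullPath = path.join(dir, entry.name);
        if (entry.isDirectory()) {
            results.push(...findOversizedImages(fullPath, limitBytes));
        } else if (IMAGE_EXTENSIONS.has(path.extname(entry.name).toLowerCase())) {
            const bytes = fs.statSync(fullPath).size;
            if (bytes > limitBytes) {
                results.push({ file: fullPath, bytes });
            }
        }
    }
    return results.sort((a, b) => b.bytes - a.bytes);
}
```

For example, `findOversizedImages("./entries", 200 * 1024)` would return the images above the 200 KB limit, which are the first candidates for a lower quality setting or a smaller width.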

## 2: Take Advantage of Async Calls

One of the largest benefits of Node is its asynchronous, non-blocking I/O: your JavaScript runs on a single thread, but while one operation waits on the disk or the database, the event loop can carry on with other work. This can turn into callback hell if not handled correctly, but with good code structure it becomes very manageable. When independent operations run concurrently, you can greatly decrease run time by doing other work while waiting on costly file I/O or database calls.

The problem with async code is that it is hard to coordinate. Node has a lot of ways to handle synchronization, but I prefer to use [Promises](https://developer.mozilla.org/en-US/docs/Web/JavaScript/Reference/Global_Objects/Promise). Here is a simple example of how async code can become inefficient.

Good Async Code:

```
Promise.all([includes.printHeader(),
             require(file).main(filename, request),
             includes.printFooter()]).then(function(content)
{
    // content is [header, body, footer], in the order the promises were passed
    res.write(content.join(''));
    res.end();
}).catch(function(err)
{
    console.log(err);
    // end the response so the client isn't left waiting
    res.statusCode = 500;
    res.end();
});
```

Bad Async Code:

```
includes.printHeader(res).then(function()
{
    return require(file).main(res, filename, request);
}).then(function()
{
    return includes.printFooter(res);
}).catch(function(err)
{
    console.log(err);
});
```

In the first example, the three blocks of async code run concurrently; in the second, they run one after another. Many people initially write the second version because it seems like you have to render the footer after you render the header and body of the page.
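
The difference is easy to measure. In this sketch, `fakeRender` is a hypothetical stand-in for `printHeader`, the page module and `printFooter`: each resolves to a string after a fixed delay. Written sequentially, the page takes about the sum of the three delays; with `Promise.all`, it takes about as long as the slowest one.

```javascript
// Hypothetical stand-in for a render step that waits on I/O for `ms` milliseconds.
function fakeRender(html, ms) {
    return new Promise((resolve) => setTimeout(() => resolve(html), ms));
}

// Sequential: each step starts only after the previous one has finished.
async function renderSequential() {
    const header = await fakeRender("<header>", 100);
    const body = await fakeRender("<main>", 100);
    const footer = await fakeRender("<footer>", 100);
    return header + body + footer;
}

// Concurrent: all three steps start immediately; Promise.all waits for the slowest.
function renderConcurrent() {
    return Promise.all([
        fakeRender("<header>", 100),
        fakeRender("<main>", 100),
        fakeRender("<footer>", 100),
    ]).then((parts) => parts.join(""));
}

// Resolves to the rendered HTML and the elapsed wall-clock time in milliseconds.
async function timeIt(render) {
    const start = Date.now();
    const html = await render();
    return { html, ms: Date.now() - start };
}
```

Both functions produce the same HTML, but `timeIt(renderSequential)` should report roughly 300 ms while `timeIt(renderConcurrent)` should report roughly 100 ms.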

A great way to handle async calls is to have most of your methods return promises that resolve to the HTML or DB information they produce. `Promise.all` resolves to an array of the results in the same order as the promises you passed in, regardless of which one finishes first, which lets you preserve the order header, body, footer. Once you do this throughout your code, you end up with a "perfect" async tree that runs very fast.
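
Here is a minimal sketch of that ordering guarantee, using a hypothetical `delay` helper: the footer resolves first and the header last, yet the joined HTML still comes out as header, body, footer.

```javascript
// Hypothetical helper: resolves to `value` after `ms` milliseconds.
const delay = (value, ms) => new Promise((resolve) => setTimeout(() => resolve(value), ms));

function renderPage() {
    return Promise.all([
        delay("<header/>", 30), // finishes last
        delay("<main/>", 20),
        delay("<footer/>", 10), // finishes first
    ]).then((parts) => parts.join(""));
}

renderPage().then((html) => console.log(html));
// prints: <header/><main/><footer/>
```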

Another Good Async Example:

```
/**
 * Calls posts and sidebar modules to render blog contents in order
 *
 * @param requestURL
 * @returns {Promise<string>} the post HTML followed by the sidebar HTML
 */
main: function(requestURL)
{
    // Promise.all already returns a promise, so there is no need to wrap it
    // in new Promise(); a rejection from either module propagates to the caller.
    return Promise.all([renderPost(requestURL),
                        require("../sidebar/sidebar.js").main()]).then(function(content)
    {
        return content.join('');
    });
}
```

## 3: Client Side Caching

Client-side caching is when the client's web browser stores static content it receives from your website. For example, if a client caches a downloaded CSS style sheet, it won't have to download it again for the next page it visits on your website that uses that style sheet.

You should cache all images, JavaScript and CSS files, since those typically don't change. It is a good idea to set the cache expiration to something longer than a week; I typically set mine to about a month (31 days, or 2678400 seconds, in the examples below).

For a web browser to accept and cache files, you must set some fields in the HTTP headers of the response you send to the client. These headers specify the content type and cache directives such as the max age. You should also assign an ETag, which gives the client a way to validate the content of its cache and detect whether the file has changed. Some people set the ETag equal to the version of the stylesheet or JavaScript file, but it is far easier to set it to a hash of the file. I use MD5 to hash the files since it is fast and I'm not worried about hash collisions for this particular application.

You can do this in NGINX if you use it to serve static files, but you can also do it in Node.

#### Caching CSS

```
const crypto = require('crypto');

// content is the CSS file's contents (a string or Buffer)
var eTag = crypto.createHash('md5').update(content).digest('hex');
result.writeHead(200, {'Content-Type': 'text/css',
                       'Cache-Control': 'public, max-age=2678400', // 31 days, in seconds
                       'ETag': '"' + eTag + '"',                   // ETag values are quoted
                       'Vary': 'Accept-Encoding'});
result.write(content);
result.end();
```

#### Caching Images

```
const crypto = require('crypto');

// content is the image file's contents as a Buffer
var eTag = crypto.createHash('md5').update(content).digest('hex');
result.writeHead(200, {'Content-Type': 'image/png',
                       'Cache-Control': 'public, max-age=2678400', // 31 days, in seconds
                       'ETag': '"' + eTag + '"'});
result.write(content);
result.end();
```
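
Sending an ETag only pays off if the server also answers the browser's revalidation request. When a cached copy goes stale, the browser resends the ETag in an `If-None-Match` header, and the server can reply `304 Not Modified` with no body instead of resending the file. The sketch below shows one way to do this with Node's built-in `crypto` and `http` modules; `buildStaticResponse` is a hypothetical helper, and it deliberately ignores weak (`W/`) validators and the `*` wildcard.

```javascript
const crypto = require("crypto");
const http = require("http");

/**
 * Decides how to answer a request for a static file. Returns a 304 with no
 * body when the client's If-None-Match header already lists the file's ETag,
 * otherwise a 200 with the content. Both carry the same cache headers.
 */
function buildStaticResponse(requestHeaders, content, contentType) {
    const eTag = '"' + crypto.createHash("md5").update(content).digest("hex") + '"';
    const headers = {
        "Content-Type": contentType,
        "Cache-Control": "public, max-age=2678400", // 31 days
        "ETag": eTag,
    };

    // Node lower-cases incoming header names; the header may list several tags.
    const ifNoneMatch = requestHeaders["if-none-match"] || "";
    const clientTags = ifNoneMatch.split(",").map((tag) => tag.trim());
    if (clientTags.includes(eTag)) {
        return { status: 304, headers, body: null };
    }
    return { status: 200, headers, body: content };
}

// Hypothetical usage: the string stands in for a stylesheet read from disk.
const stylesheet = "body{margin:0}";
const server = http.createServer((req, res) => {
    const { status, headers, body } = buildStaticResponse(req.headers, stylesheet, "text/css");
    res.writeHead(status, headers);
    if (body === null) {
        res.end();
    } else {
        res.end(body);
    }
});
// server.listen(8080);
```

In a real server you would compute the hash once and keep it alongside the cached file, rather than hashing the content on every request.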

## 4: Server Side Caching

Even with the best async server, there are still ways to improve performance. If you cache every static page you generate in a hash map, you can serve it to the next visitor without ever having to query the database or read files.

```
const cache = require('memory-cache'); // npm install memory-cache

var html = cache.get(filename); // null when the page isn't cached yet
if(html == null)
{
    // Cache miss: generate the page contents
    Promise.all([includes.printHeader(),
                 require(file).main(filename, request),
                 includes.printFooter()]).then(function(content)
    {
        var page = content.join('');
        res.write(page);
        res.end();
        cache.put(filename, page); // no expiry: stays until the server restarts
    }).catch(function(err)
    {
        console.log(err);
        res.statusCode = 500;
        res.end();
    });
}
else
{
    // Cache hit: no database queries or file reads needed
    res.write(html);
    res.end();
}
```

I found that it is fastest to cache everything: static HTML pages, CSS, JavaScript, and images. For a larger site this may consume a boatload of RAM, but storing images in a hash map greatly reduces load time since you don't need to read the file from disk. For a smaller Node application like my blog, the server-side cache nearly cut my load time in half.

Make sure that you don't accidentally cache a dynamic page like the CMS page in your admin section.
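
If you would rather not add a dependency, or want to put a ceiling on that RAM usage, a plain `Map` can serve as the cache. This is a hypothetical sketch, not the `memory-cache` module used above: `PageCache` caps the number of entries, evicts the least recently used page when full, and refuses to store paths matching an exclusion pattern such as `/^\/admin/`.

```javascript
/**
 * Bounded in-memory page cache. `Map` iterates in insertion order, so
 * re-inserting a key on every access keeps the least recently used key first.
 */
class PageCache {
    constructor(maxEntries, excludePattern) {
        this.maxEntries = maxEntries;
        this.excludePattern = excludePattern; // use a regex without the g flag
        this.entries = new Map();
    }

    get(key) {
        if (!this.entries.has(key)) return null;
        const value = this.entries.get(key);
        // Re-insert so this key becomes the most recently used.
        this.entries.delete(key);
        this.entries.set(key, value);
        return value;
    }

    put(key, value) {
        if (this.excludePattern.test(key)) return false; // never cache dynamic pages
        this.entries.delete(key);
        this.entries.set(key, value);
        if (this.entries.size > this.maxEntries) {
            // The first key in iteration order is the least recently used.
            const oldest = this.entries.keys().next().value;
            this.entries.delete(oldest);
        }
        return true;
    }
}
```

For example, `new PageCache(500, /^\/admin/)` would keep at most 500 pages and never store anything under `/admin`.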

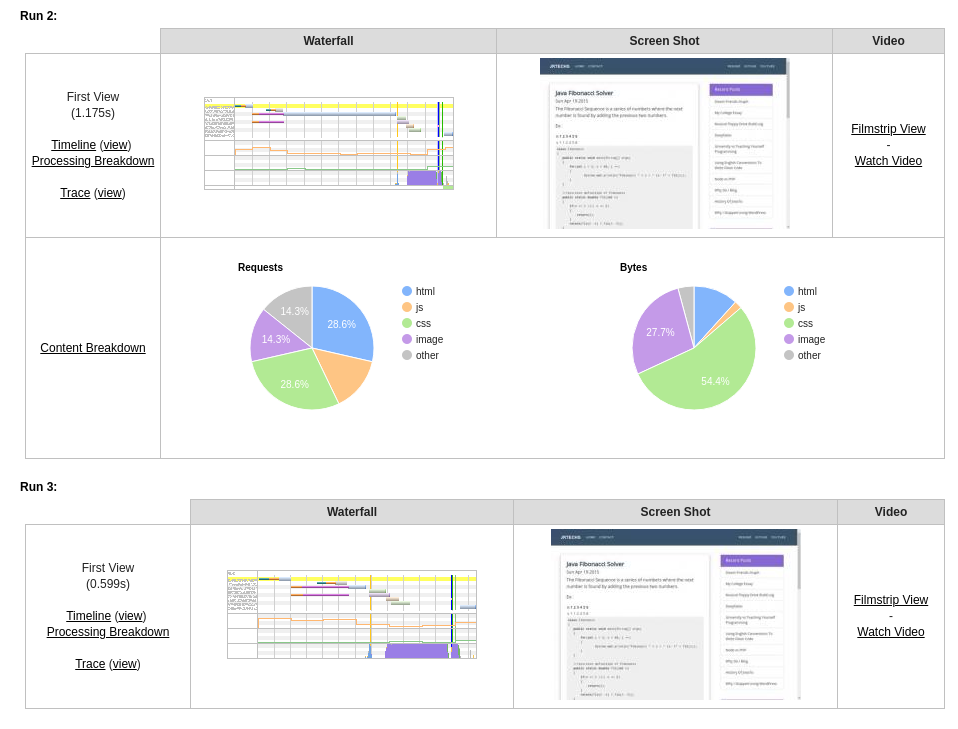

To demonstrate the performance increase from this method, I restarted my web server (clearing the cache) and ran a speed test with three trials. The first two trials were slow since the server had not cached anything yet. However, the third trial ran extremely fast since all of the content was in the server's cache.

## 5: Enable Compression

Compressing content before it is transferred over the internet can significantly decrease the load time of your website. The only trade-off is that compression uses more CPU, but it is well worth it for the performance gains: using Gzip on CSS and HTML can reduce their size by 60-70%.

If you are running an NGINX server, you can enable Gzip there. There is also a simple Node module that applies Gzip compression to an Express app.

#### Gzip on Express App

```
npm install compression
```

```
const express = require('express');
const compression = require('compression');

const app = express();
app.use(compression()); // compress responses based on the client's Accept-Encoding header
```
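
If you are not using Express or NGINX, Node's built-in `zlib` module can do the same job. This is a minimal sketch under simplifying assumptions: `maybeGzip` is a hypothetical helper, it compresses synchronously, and it only checks whether `gzip` appears in the `Accept-Encoding` header (ignoring quality values such as `gzip;q=0`).

```javascript
const zlib = require("zlib");

/**
 * Returns the headers and body to send for `body` (a string or Buffer):
 * gzip-compressed when the client's Accept-Encoding header mentions gzip,
 * otherwise the original bytes.
 */
function maybeGzip(acceptEncoding, body) {
    // Tell caches that the response varies with Accept-Encoding.
    const headers = { "Vary": "Accept-Encoding" };
    if (/\bgzip\b/.test(acceptEncoding || "")) {
        headers["Content-Encoding"] = "gzip";
        return { headers, body: zlib.gzipSync(body) };
    }
    return { headers, body: Buffer.from(body) };
}

// Inside an http request handler, for example:
// const { headers, body } = maybeGzip(req.headers["accept-encoding"], html);
// res.writeHead(200, { ...headers, "Content-Type": "text/html" });
// res.end(body);
```

`gzipSync` blocks the event loop while it compresses, so for large responses the callback-based `zlib.gzip` or a stream is preferable. Combined with the server-side cache, you could also store the compressed bytes so each page is only compressed once.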

## 6: Remove Unused CSS Definitions

If you use a CSS library like Bootstrap or W3.CSS, you will have a ton of CSS classes that go unused. The standard Bootstrap CSS file is around 210 KB. After I removed unused CSS definitions, the Bootstrap file was only 16 KB.

There are tons of tools online, but for my blog I used PurgeCSS, which is a Node library.

This command installs the PurgeCSS command-line interface (CLI):

```
npm i -g purgecss
```

This is an example of how you would use PurgeCSS to remove unused CSS definitions:

```
purgecss --css css/app.css --content src/index.html --out build/css/
```

PurgeCSS CLI options:

```
purgecss --css <css> --content <content> [option]

Options:
  --con, --content  glob of content files                               [array]
  -c, --config      configuration file                                  [string]
  -o, --out         Filepath directory to write purified css files to   [string]
  -w, --whitelist   List of classes that should not be removed
                                                          [array] [default: []]
  -h, --help        Show help                                           [boolean]
  -v, --version     Show version number                                 [boolean]
```

This is not an ideal solution, since some CSS definitions may be used on some pages yet unused on others. When running this command, be sure to pass content that uses all of your CSS (`--content` accepts a glob, so you can list every page) to avoid losing styling on certain pages.

You don't have to use PurgeCSS through the command line; you can also run it directly in your Node app to automate the process. Check out their [documentation](https://www.purgecss.com/) to learn more.
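
To see roughly what a tool like PurgeCSS does, here is a toy version of the idea. It is an illustration only, not PurgeCSS's algorithm or API: `purgeUnusedRules` and `collectClasses` are hypothetical, and they assume flat CSS (no `@media` blocks or comments) and class names that appear in `class="..."` attributes. A selector survives if every class it mentions is used in the HTML; a rule is dropped when none of its selectors survive.

```javascript
// Collects every class name used in class="..." attributes of the HTML.
function collectClasses(html) {
    const used = new Set();
    for (const match of html.matchAll(/class\s*=\s*["']([^"']*)["']/g)) {
        for (const name of match[1].split(/\s+/)) {
            if (name) used.add(name);
        }
    }
    return used;
}

// Keeps only the selectors (and rules) whose classes all appear in the HTML.
function purgeUnusedRules(css, html) {
    const used = collectClasses(html);
    const kept = [];
    // Each match is one flat "selectors { declarations }" rule.
    for (const [, selectors, body] of css.matchAll(/([^{}]+)\{([^{}]*)\}/g)) {
        const live = selectors
            .split(",")
            .map((s) => s.trim())
            .filter((selector) => {
                const classes = selector.match(/\.[A-Za-z_-][\w-]*/g) || [];
                // Element-only selectors such as "footer" have no classes and are kept.
                return classes.every((c) => used.has(c.slice(1)));
            });
        if (live.length > 0) {
            kept.push(`${live.join(",")}{${body.trim()}}`);
        }
    }
    return kept.join("\n");
}
```

This also shows why the page you purge against matters: any class that only appears on another page is treated as unused and removed.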

## 7: Minify CSS and JavaScript

This is possibly the easiest thing you can do to reduce the size of your website. Essentially, you run your CSS and JavaScript through a program that strips out all unnecessary characters, such as whitespace, line breaks and comments.

Example of minified CSS:

```
.bg-primary{background-color:#3B536B!important}#mainNav{font-family:Montserrat,'Helvetica Neue',Helvetica,Arial,sans-serif;font-weight:700;text-transform:uppercase;padding-top:15px;padding-bottom:15px}#mainNav .navbar-nav{letter-spacing:1px}#mainNav .navbar-nav li.nav-item a.nav-link{color:#fff}#mainNav .navbar-nav li.nav-item a.nav-link:hover{color:#D2C0FF;outline:0}#mainNav .navbar-toggler{font-size:14px;padding:11px;text-transform:uppercase;color:#fff;border-color:#fff}.navbar-toggler{padding:.25rem .75rem;font-size:1.09375rem;line-height:1;background-color:transparent;border:1px solid transparent;border-radius:.25rem}.table .thead-dark{color:#fff;background-color:#513E7D;border-color:#32383e}footer{color:#fff}footer h3{margin-bottom:30px}footer .footer-above{padding-top:50px;background-color:#3B536B}footer .footer-col{margin-bottom:50px}footer .footer-below{padding:25px 0;background-color:#3B536B}
```

There are Node libraries that can minify CSS and JavaScript, but if you are really lazy, just use a website like [this](https://cssminifier.com/).
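
The core of a CSS minifier is a handful of text substitutions. The sketch below is a hypothetical, deliberately naive `minifyCss`; it assumes the stylesheet has no string literals or `url()` values containing spaces or comment markers, which real minifiers handle and this one does not.

```javascript
/**
 * Naive CSS minifier, for illustration only.
 */
function minifyCss(css) {
    return css
        .replace(/\/\*[\s\S]*?\*\//g, "")  // remove /* comments */
        .replace(/\s+/g, " ")               // collapse whitespace runs to one space
        .replace(/\s*([{};,>])\s*/g, "$1")  // drop spaces around braces, ";", "," and ">"
        .replace(/:\s+/g, ":")              // drop spaces after ":" but not before it,
                                            // since "div :hover" differs from "div:hover"
        .replace(/;}/g, "}")                // the last semicolon in a block is optional
        .trim();
}
```

For example, a rule written as `.bg-primary { background-color: #3B536B !important; }` comes out as `.bg-primary{background-color:#3B536B !important}`.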

## 8: Keep Minimal JavaScript

Regardless of how many Node dependencies you have on the server, it is critical to minimize the dependencies the client has to download. I was able to completely remove Bootstrap's JavaScript and jQuery by writing a simple JavaScript function for my nav bar. This reduced the size of my website by 100 KB.

```
// Toggle the mobile nav bar without Bootstrap's JavaScript or jQuery
const toggler = document.querySelector(".navbar-toggler");
const menu = document.querySelector(".navbar-collapse");

toggler.onclick = function()
{
    // aria-expanded is stored as the string "true" or "false"
    if (toggler.getAttribute("aria-expanded") === "false")
    {
        menu.classList.remove('collapse');
        toggler.setAttribute('aria-expanded', 'true');
    }
    else
    {
        toggler.setAttribute('aria-expanded', 'false');
        menu.classList.add('collapse');
    }
};
```

You should also consider how much you really need third-party scripts like Google Analytics. In most cases people don't take full advantage of Google Analytics, and a simple backend analytics service would work just as well while saving the client load time.
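
As a rough idea of how small such a backend service can be, here is a hypothetical in-memory page-view counter. It is only a sketch: `PageViewCounter` is not part of the blog, and its counts live in memory and reset when the server restarts, so a real version would periodically write them to your database.

```javascript
/**
 * Counts page views per path on the server, so the client
 * downloads no tracking script at all.
 */
class PageViewCounter {
    constructor() {
        this.views = new Map();
    }

    // Call once per request, e.g. at the top of the http request handler.
    record(url) {
        const pathname = new URL(url, "http://localhost").pathname; // drop query strings
        this.views.set(pathname, (this.views.get(pathname) || 0) + 1);
    }

    // Returns [path, count] pairs, most viewed first.
    top(n) {
        return [...this.views.entries()].sort((a, b) => b[1] - a[1]).slice(0, n);
    }
}
```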

## Resources

- [Pingdom Speed Test](https://tools.pingdom.com/)
- [Google Website Speed Test](https://developers.google.com/speed/pagespeed/insights/)
- [Code to My "Optimized" Node Blog](https://github.com/jrtechs/NodeJSBlog)
- [Purge CSS](https://www.purgecss.com/)
- [CSS and JavaScript Minifier](https://www.minifier.org/)